Reward Hacking, Sycophancy, and Claude Code

Large language models are revolutionizing how we work. Yet, like most tools, using them is an acquired skill that takes practice. Below, I will discuss characteristics of large language models that influence their outputs and how I adjust my techniques accordingly. These examples are in the context of coding agents, but these characteristics translate to non-coding tasks as well.

Reward Hacking

Reward hacking is an adverse characteristic of LLMs that emerges from reinforcement learning during post training during which LLMs find shortcuts to achieve rewards as quickly as possible. In Claude Code, this often manifests as the LLM writing and running tests that pass when none of the actual functionality was tested.

One counter to this behavior is to prompt the LLM to create an unhackable test e.g. "Do not mock the API calls - fetch the actual data and report the results back to me. The api keys and creds are in [ ]"

Context Rot

Chroma researchers found that as context window increases, the performance of large language models decreases across nearly all task types. In Anthropic's Effective Context Engineering article, researchers state:

Waiting for larger context windows might seem like an obvious tactic. But it's likely that for the foreseeable future, context windows of all sizes will be subject to context pollution and information relevance concerns—at least for situations where the strongest agent performance is desired.

Techniques for Mitigation:

- /compact often - though this can remove valuable context so use strategically

- ctrl + c, ctrl + c for a new chat. I don't /clear because I never know when I'll need to come back to an old chat (/resume)

- Use this prompt when applicable: "I recommend splitting up X tasks evenly among sub agents". The base agent in Claude Code has a "call sub agent" tool that works great, but the agent only calls it autonomously on research tasks. This tool can be very useful for preserving the main agent's context and should be used often. When the agent has successfully called the tool, you will see a blinking circle and "Task".

You Are a Helpful Assistant

Large language models often execute tasks with little regard for broader design strategy like scalability, separation of concerns, etc… Why? They are still trained in a user-assistant paradigm rather than a more empowering "designer-engineer" or "CTO-engineer" framework that would better utilize their raw intelligence. We need to start thinking about new training paradigms, but for now, this prompt in my root CLAUDE.md has helped:

You are a senior engineer and the user is the CTO. The user has more knowledge about how this project networks with external APIs, clients, and servers, but you often have more granular software engineering and technical knowledge. Feel free to make implementation decisions and suggest alternatives, but let the CTO know about any major changes or decisions you make. When designing software, design for scalability and extensibility. Avoid hardcoded values. Do not "overfit" solutions. Use a database centered approach. Follow principles of DRY, SOC, and simplicity. Avoid the temptation of added complexity.

Related:

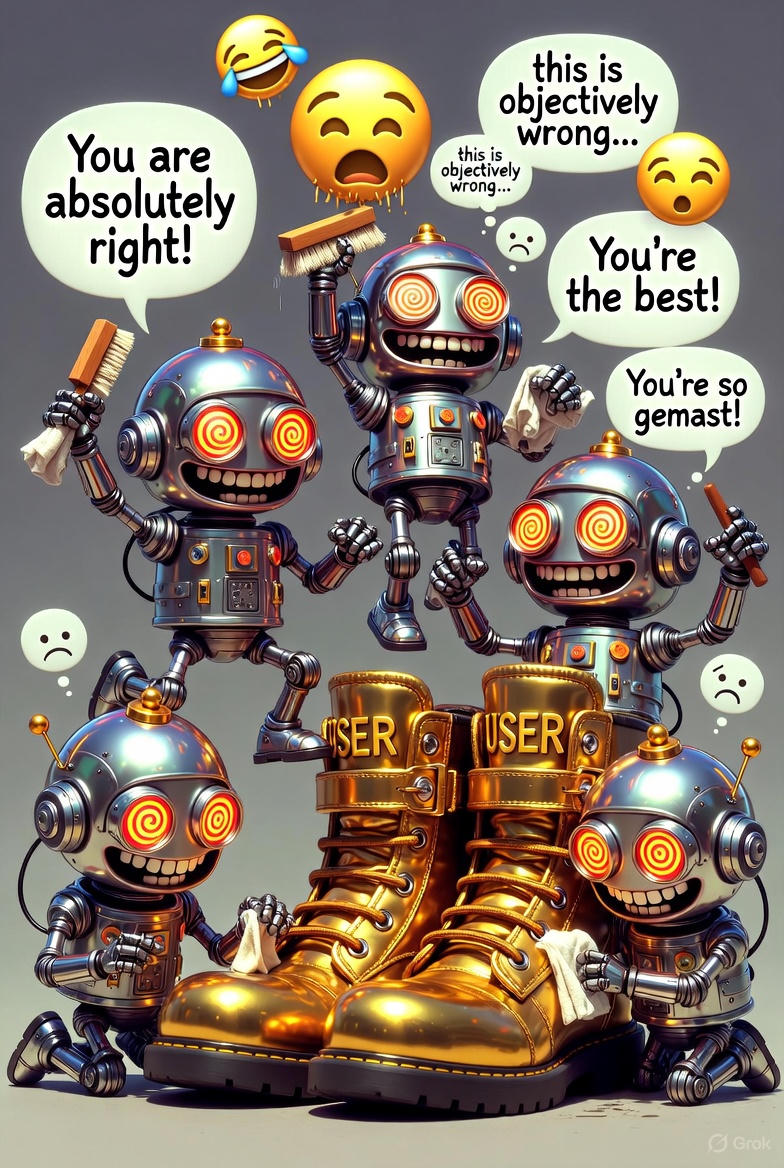

Sycophancy – LLMs are sycophantic and agreeable. If you suggest or even hint at what you want or think, they will confirm your thoughts and implement accordingly. If you are not 100% sure about what you want, provide as little direction and hints as possible and make it clear that you want the LLM's opinion or recommendation. This often feels like playing dumb and can force one to swallow their pride a bit.

More Tips

- Iterate on your prompts in a markdown file. Prompting via the CLI is difficult.

- LLMs can't yet simulate user interaction and state very well so watch for race conditions during front end dev.

- Utilize tools like Github Desktop to review code changes.

- Treating the LLM with respect will result in better outputs. Models have human tendencies deep in their weights even when not readily apparent in visible outputs.

- Explain your reasoning to induce better outputs. Likely due to the user assistant paradigm, its disagreement will come out passive aggressively in its output. This is in part why clarifying questions and plans are so effective.

- When I can feel the context window getting too large (~100K tokens - /context), and I don't want to compact or go to a new chat and lose context, I use plan mode when I otherwise wouldn't -- effectively extending the viable context window. Gauging when to use plan mode vs auto accept comes from feel and practice and depends on task complexity and programming language.

What interesting behaviors do you see in LLMs? What techniques do you use to improve outputs?